The hype around Generative AI is real. You may be wondering what this complicated, futuristic-sounding term actually means and how it works.

For those who (like me) didn’t know anything about it, Generative AI refers to unsupervised and semi-supervised machine learning algorithms that enable computers to use existing content such as images, audio, text, videos, or even code to create new content. The main idea is to generate original artefacts that look real, all thanks to data.

Still confused? Here is a simple example. I tried generating content on a Generative AI platform (the now famous DALL-E 2) by typing ‘lemons’ and ‘customer support’. A few seconds later, I was scrolling through generated pictures of lemons wearing headsets and lemons answering the phone. I had never seen anything like it before!

However, it isn’t as simple as it looks.

Generative AI algorithms require an enormous amount of training data to perform their tasks, because they generate new content by combining what they have already learned. Much of that learning depends on data annotation: the essential process of labelling data to make it usable for artificial intelligence systems, particularly those using supervised or semi-supervised machine learning. In other words, data annotation prepares the material that machine learning trains on by cropping, categorizing and labelling large datasets comprising all types of content: images, videos, audio, text and more.
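To make this a little more concrete, here is a minimal sketch of what one annotation might look like in code. The `BoxAnnotation` schema, the field names and the tiny 4x4 "image" are all made up for illustration; real annotation tools each use their own formats.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class BoxAnnotation:
    """One labelled region of an image (hypothetical schema)."""
    image_id: str
    label: str   # category chosen by the human labeller
    x: int       # left edge of the box, in pixels
    y: int       # top edge of the box, in pixels
    width: int
    height: int


def crop_region(pixels, box):
    """Return the part of a 2D pixel grid covered by the box."""
    rows = pixels[box.y:box.y + box.height]
    return [row[box.x:box.x + box.width] for row in rows]


def label_counts(annotations):
    """Count how many annotations carry each label."""
    return Counter(a.label for a in annotations)


# Made-up example data: a 4x4 "image" and two labelled boxes.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [2, 2, 3, 3],
    [2, 2, 3, 3],
]
annotations = [
    BoxAnnotation("img-001", "lemon", x=2, y=0, width=2, height=2),
    BoxAnnotation("img-001", "headset", x=0, y=2, width=2, height=2),
]

lemon_crop = crop_region(image, annotations[0])   # cropping
counts = label_counts(annotations)                # categorizing
```

Each record ties a human-chosen label to a specific region of a specific image, which is the kind of pairing a model can then learn from.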

Without data labellers, artificial intelligence just wouldn’t exist.

What I also want to stress is that there isn’t any artificial consciousness (yet?). Generative AI does not create a piece of art because it knows the result is pretty or because it likes the colours. It only creates content based on the data it was trained on, by forming connections between the concepts in that data. And all this is possible thanks to annotation.

However, here come the risks.

Have you ever heard of the ‘salmon fish swimming in the river’? Or maybe you’ve come across the picture on the right on social media platforms?

In this picture, you can no doubt see a salmon ‘swimming’ in the river. Yet the Generative AI clearly did not understand what state that poor salmon should be in: what you see is a good-looking, sashimi-style piece of salmon floating in a river.

So, should we really trust generative AI? Can it be reliable?

Here again, quality annotation matters. It is not enough to conduct superficial annotation and expect the Generative AI to develop and become more efficient. Quality annotation feeds quality Generative AI. With more and better data annotation, the ‘salmon incident’, or something even worse, could be avoided.

In fact, neural networks are used for incredibly important things, and flawed training data can lead them astray.

Take the often-told example of an army that used neural networks to distinguish between camouflaged tanks and plain forests. The issue was that the pictures of the tanks had been taken on cloudy days, whereas the pictures of the forest had been taken on sunny days. As a result, the neural network learned to distinguish between sunny and cloudy days rather than to detect the presence of tanks. It had learned the wrong way to differentiate the two.
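The same trap can be reproduced with a toy example. The sketch below does not use a neural network: it swaps in a much simpler model, a single threshold on image brightness, and the brightness values are invented. The point is only to show how a model can score perfectly on its training photos while having learned the weather rather than the tanks.

```python
def accuracy(samples, threshold):
    """Share of samples where the rule 'tank if brightness < threshold' is right."""
    correct = sum((s["brightness"] < threshold) == s["tank"] for s in samples)
    return correct / len(samples)


def fit_threshold(samples):
    """Try each observed brightness as a threshold and keep the most accurate."""
    best_threshold, best_accuracy = None, 0.0
    for candidate in sorted({s["brightness"] for s in samples}):
        score = accuracy(samples, candidate)
        if score > best_accuracy:
            best_threshold, best_accuracy = candidate, score
    return best_threshold, best_accuracy


# Invented training set: every tank photo is dark (cloudy day),
# every forest photo is bright (sunny day).
train = [
    {"brightness": 0.25, "tank": True},
    {"brightness": 0.30, "tank": True},
    {"brightness": 0.35, "tank": True},
    {"brightness": 0.75, "tank": False},
    {"brightness": 0.80, "tank": False},
    {"brightness": 0.85, "tank": False},
]

# Invented new photos where the weather is reversed.
new_photos = [
    {"brightness": 0.82, "tank": True},    # tank on a sunny day
    {"brightness": 0.28, "tank": False},   # empty forest on a cloudy day
]

threshold, train_accuracy = fit_threshold(train)
new_accuracy = accuracy(new_photos, threshold)
```

On the training photos the rule looks flawless, yet it gets both new photos wrong, because brightness was never evidence of a tank in the first place. More varied and carefully annotated data is what exposes this kind of shortcut.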

Nonetheless, no matter the mistakes, it cannot be disputed that Generative AI has applications in many fields, including marketing, education, healthcare, and entertainment.

Still in doubt about the power of Generative AI? What if I told you that Generative AI could now help detect the onset of blindness at an early stage in diabetic patients?

In fact, diabetics can experience several adverse long-term effects, one being retinopathy: progressive damage to a patient’s retina that can lead to vision impairment. Generative AI-based applications can evaluate millions of images of patients with retinopathy and then generate brand-new datasets that cover a far wider range of scenarios. These datasets can also show what early-stage retinopathy looks like. Once this is accomplished, ophthalmologists can take preventive action to treat patients’ diabetic retinopathy early, if not prevent vision loss altogether.

Generative AI, as seen in healthcare, can generate a wide range of outcomes from the data provided to it. This capability is essential for healthcare, as human and animal bodies are complex enough that existing data must be used to create new data.

You may now have realized that machine learning algorithms need tens of thousands of examples to carry out different tasks. They also need accurate data annotations. How dangerous would annotation mistakes be in healthcare, for example?

As we have seen, generative AI can be used in various fields, including the arts. An intriguing example of modern AI being used to create art is Sofia Crespo’s ongoing project “Artificial Natural History” (2020), which examines speculative, artificial life through the prism of a “natural history book that never was”.

The artist created a series of distorted creatures with imagined features that would require new sets of biological classifications. Art like this can play with the endless diversity of nature, of which we have only limited awareness.

On the left, you can find examples of AI-generated creatures in Artificial Natural History.

When a singer passes away, fans always wonder about the music that would have been made had they lived. Sometimes new songs even appear after a singer’s death, such as ‘Face It Alone’ (2022) by Queen, featuring unearthed Freddie Mercury vocals 31 years after his death.

What if we could hear their voices, no matter the year, on actual new songs? What if we could still hear Freddie Mercury, Amy Winehouse, Jimi Hendrix, Kurt Cobain, Michael Jackson, Whitney Houston, Prince and so many more in 2022?

What if it was actually possible? No… what if it already happened?

An organization has created a ‘new’ Nirvana song, ‘Drowned in the Sun’, using artificial intelligence software to approximate the singer-guitarist’s songwriting. Other than the vocals, which are the work of Nirvana tribute band frontman Eric Hogan, the song’s creators say that everything on the song is the work of a computer. ‘Lost Tapes of the 27 Club’ is a project featuring songs written and performed by machines in the style of musicians who died at 27: Jimi Hendrix, Amy Winehouse, Jim Morrison… Each song is the result of a machine analyzing 30 songs by each artist and studying the tracks’ vocal melodies, guitar riffs, chord changes, lyrics, drum patterns and more to guess what their new compositions would sound like.
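For the curious, here is a very rough sketch of the general idea of learning patterns from existing songs and then guessing new ones. It is not the software used for the project: it swaps in a simple Markov chain over chord changes, and the three "songs" are invented chord lists, not real transcriptions.

```python
import random
from collections import defaultdict


def learn_transitions(songs):
    """Record, for each chord, every chord that followed it in the songs."""
    transitions = defaultdict(list)
    for chords in songs:
        for current, following in zip(chords, chords[1:]):
            transitions[current].append(following)
    return dict(transitions)


def generate(transitions, start, length, seed=0):
    """Build a new progression by repeatedly picking a chord seen after the last one."""
    rng = random.Random(seed)  # fixed seed so the result is repeatable
    progression = [start]
    while len(progression) < length:
        options = transitions.get(progression[-1])
        if not options:        # no known continuation for this chord
            break
        progression.append(rng.choice(options))
    return progression


# Invented chord progressions standing in for the analyzed songs.
songs = [
    ["Em", "G", "C", "Em"],
    ["Em", "C", "G", "D"],
    ["G", "D", "Em", "C"],
]

transitions = learn_transitions(songs)
new_progression = generate(transitions, start="Em", length=8)
```

Every chord change in the new progression was seen somewhere in the source songs, yet the progression as a whole need not match any of them. Real systems apply the same principle to far richer material such as melodies, riffs, lyrics and drum patterns.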

Below are some examples of AI-created songs.

For more information visit this website.

There are several use cases for Generative AI. It can create brand-new content from existing datasets, paving the way for machine learning in a future where data becomes even more valuable than it is today.

Others believe that the biggest opportunity in Generative AI will be language.

AI-powered text generation will create many orders of magnitude more value than will AI-powered image generation in the years ahead. Machines’ ability to generate language—to write and speak—will prove to be far more transformative than their ability to generate visual content.

Rob Toews, Partner at Radical Ventures

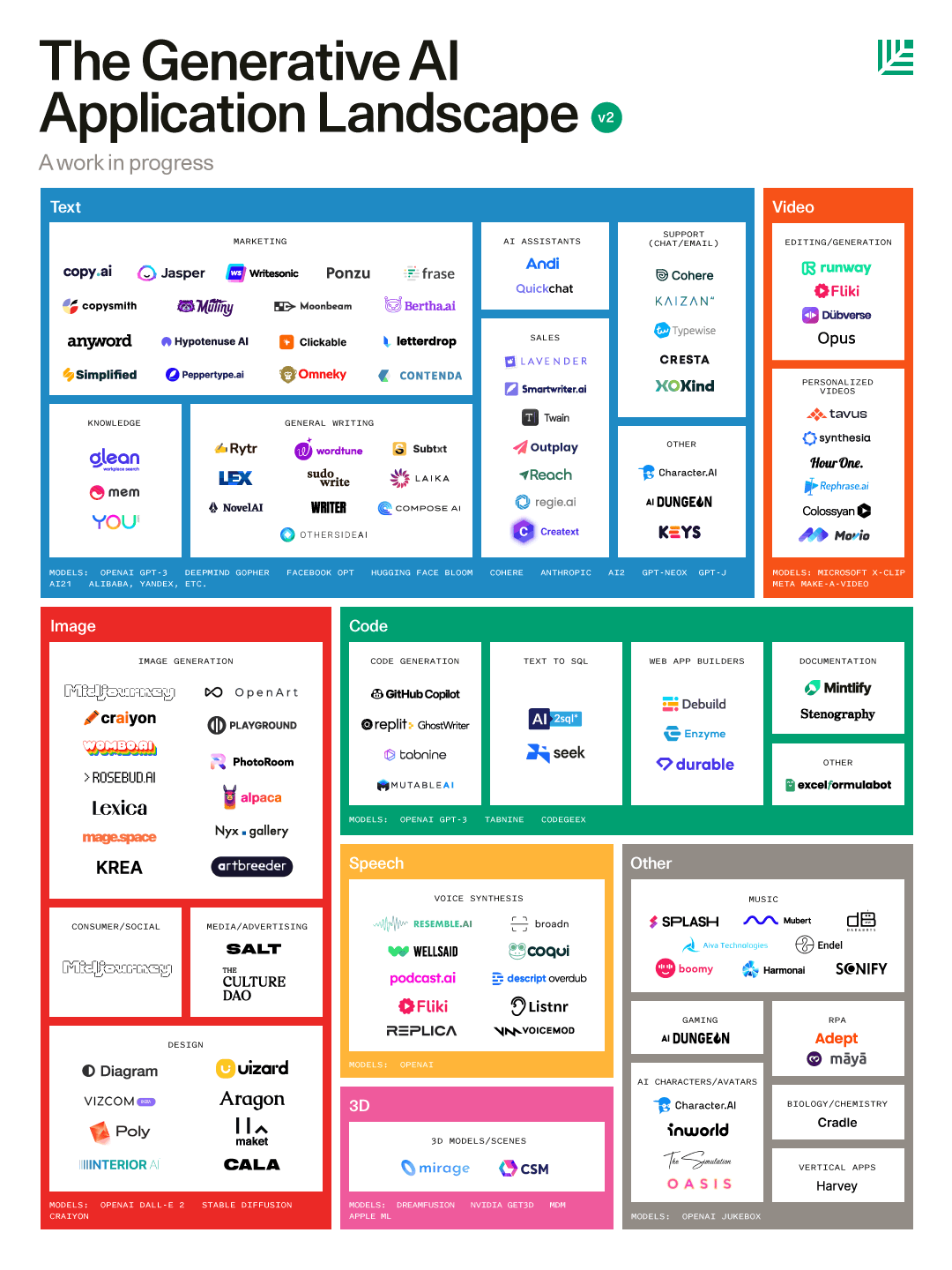

The Generative AI application landscape keeps expanding, with an expected CAGR of 19.8% from 2022 to 2027 (Imarc, 2022). New players building the future of Generative AI are constantly emerging thanks to the availability of open-source tools and APIs that enable them to handle all types of content, whether text, video, code, 3D or other. See, for example, the landscape below by Sonya Huang, General Partner at Sequoia Capital, which also published a detailed report on the topic.
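To get a feel for what a 19.8% CAGR means, the short calculation below compounds that rate year after year. It assumes five full years of growth between 2022 and 2027, which is my reading of the figure rather than something the cited report spells out here.

```python
def compound_growth_factor(annual_rate, years):
    """How many times larger a value becomes after compounding for `years`."""
    return (1 + annual_rate) ** years


cagr = 0.198            # 19.8% per year, as quoted above
years = 2027 - 2022     # assumption: five compounding periods

factor = compound_growth_factor(cagr, years)
```

Under these assumptions the factor comes out at roughly 2.47, meaning the market would be about two and a half times its 2022 size by 2027.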

You may have gathered that Generative AI can, in fact, create almost any kind of content. Yet it is still important to discuss the ‘grey zones’. Today, humans and machines are not competing on equal terms in spheres such as art: humans draw on small subconscious pots holding the limited experiences of a single life, whereas AI systems draw on massive pots holding the knowledge and data of billions of human beings. The result is unequal knowledge.

As such, it would be easy to say that content created by generative AI can be separated from work created by a human, but that is not necessarily true. Human beings can now produce part of their work, or polish it, with the help of AI, which leads us back to the ‘grey zone’. Is it content created by a human or by a machine?

Several companies have been working on designing systems which can be used to deal with grey zones and misinformation. The goal is to ensure that creatives are recognized for their work and that people can understand the origins and methods used to produce the content.

This is how the term ‘Responsible AI’ came into existence: systems are put in place that allow anyone to know where each piece of digital content comes from and whether generative AI was involved.

Artists will remain artists no matter how generative AI or other technologies develop. There is still a long way to go before machines take over our creativity and emotions (hopefully)!

We’re the outsourcing partner that can help you annotate, label, segment and enrich your data to build and train your artificial intelligence solutions: